- For many modalities, we can think of the data we observe as represented or generated by an associated unseen latent variable, which we can denote by the random variable $z$.

- SOURCE: Understanding Diffusion Models: A Unified Perspective

Evidence Lower Bound

- Mathematically, we can imagine the latent variables and the data we observe as modeled by a joint distribution $p(x, z)$.

- One approach to generative modeling, termed “likelihood-based”, is to learn a model that maximizes the likelihood $p(x)$ of all observed $x$. There are two ways to manipulate this joint distribution to recover the likelihood:

- Equation 1: marginalization: $p(x) = \int p(x, z)\,dz$

- Equation 2: chain rule: $p(x) = \frac{p(x, z)}{p(z \mid x)}$

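- Computing either of them directly is hard: marginalization requires integrating over all latents $z$, which is intractable for complex models, and the chain rule requires the true posterior $p(z \mid x)$, which we do not have access to.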

- However, using the two equations, we can derive a lower bound on the log-likelihood $\log p(x)$ (the evidence), which gives us a proxy objective to maximize. ⇒ ELBO

- $q_\phi(z \mid x)$ is an approximate variational distribution of the true posterior $p(z \mid x)$, with parameters $\phi$ to optimize

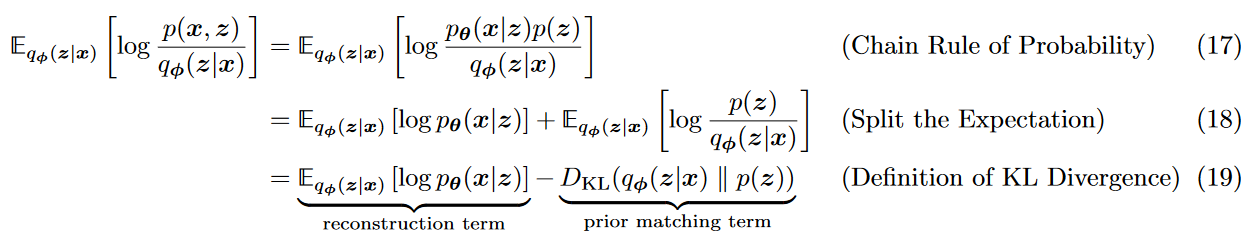

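- The ELBO can be derived by writing $\log p(x)$ and either:

- applying Eq. 1, multiplying by $\frac{q_\phi(z \mid x)}{q_\phi(z \mid x)} = 1$, and applying Jensen's inequality, which gives $\log p(x) \geq \mathbb{E}_{q_\phi(z \mid x)}\left[\log \frac{p(x, z)}{q_\phi(z \mid x)}\right]$ (not very informative, since it hides the size of the gap)

- or multiplying by $\int q_\phi(z \mid x)\,dz = 1$, applying Eq. 2, multiplying by $\frac{q_\phi(z \mid x)}{q_\phi(z \mid x)} = 1$, splitting the expectation using the log, and arriving at $\log p(x) = \mathbb{E}_{q_\phi(z \mid x)}\left[\log \frac{p(x, z)}{q_\phi(z \mid x)}\right] + D_{\mathrm{KL}}\left(q_\phi(z \mid x) \,\|\, p(z \mid x)\right)$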

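- The KL divergence is always $\geq 0$, so the ELBO can never exceed $\log p(x)$

- Conclusion: the gap between the ELBO and the evidence $\log p(x)$ is exactly the KL divergence between the approximate posterior $q_\phi(z \mid x)$ and the true posterior $p(z \mid x)$, so the ELBO is a good proxy precisely when that KL divergence is small

- ⇒ Since $\log p(x)$ does not depend on $\phi$, maximizing the ELBO with respect to $\phi$ will minimize the KL divergence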
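The decomposition of the evidence into the ELBO plus a KL term can be checked numerically on a toy model with a finite latent space, where every quantity is an exact sum. The following is a minimal sketch, not part of the source: the function name `elbo_and_kl` and the probability values are made up for illustration.

```python
import numpy as np

def elbo_and_kl(p_joint_x, q):
    """Return (log p(x), ELBO, KL(q || p(z|x))) for one fixed observation x.

    p_joint_x[k] = p(x, z=k) over a finite latent space; q[k] = q(z=k | x).
    """
    p_joint_x = np.asarray(p_joint_x, dtype=float)
    q = np.asarray(q, dtype=float)
    p_x = p_joint_x.sum()            # Eq. 1: marginalize out z
    posterior = p_joint_x / p_x      # Eq. 2 rearranged: p(z|x) = p(x, z) / p(x)
    elbo = np.sum(q * (np.log(p_joint_x) - np.log(q)))
    kl = np.sum(q * (np.log(q) - np.log(posterior)))
    return np.log(p_x), elbo, kl

# Hypothetical numbers: three latent states and one fixed observation x.
p_joint_x = [0.10, 0.05, 0.25]
q = [0.2, 0.3, 0.5]
log_px, elbo, kl = elbo_and_kl(p_joint_x, q)
print("log p(x):", log_px)
print("ELBO + KL:", elbo + kl)   # matches log p(x) up to floating-point error
```

Setting `q` equal to the true posterior drives the KL term to zero, at which point the ELBO equals $\log p(x)$.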

Variational Autoencoders

- In the default formulation of the VAE, we directly maximize the ELBO.

- It’s called variational because we optimize for the best $q_\phi(z \mid x)$ amongst a family of potential posterior distributions parameterized by $\phi$

- It’s called autoencoder because it follows the usual auto-encoder architecture: the input is trained to predict itself after passing through an intermediate bottleneck representation

- In practice, there are thus two sets of parameters to optimize: $\phi$ for the encoder and $\theta$ for the decoder.

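- Let’s see how maximizing the ELBO makes sense in this context, by splitting it into a reconstruction term and a prior-matching term: $\mathbb{E}_{q_\phi(z \mid x)}\left[\log \frac{p(x, z)}{q_\phi(z \mid x)}\right] = \mathbb{E}_{q_\phi(z \mid x)}\left[\log p_\theta(x \mid z)\right] - D_{\mathrm{KL}}\left(q_\phi(z \mid x) \,\|\, p(z)\right)$

- $p_\theta(x \mid z)$ is a deterministic function (decoder) that converts a given latent vector $z$ into an observation $x$. This explicitly assumes a somewhat deterministic mapping between $x$ and $z$.

- $q_\phi(z \mid x)$ can be seen as an intermediate bottlenecking distribution (encoder)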

How to train

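- A defining feature of the VAE is how the ELBO is optimized jointly over parameters $\phi$ and $\theta$.

- The encoder of the VAE is commonly chosen to model a multivariate Gaussian with diagonal covariance, $q_\phi(z \mid x) = \mathcal{N}\left(z; \mu_\phi(x), \sigma_\phi^2(x) I\right)$, and the prior is often selected to be a standard multivariate Gaussian, $p(z) = \mathcal{N}(z; 0, I)$.

- We learn the bottleneck mean $\mu_\phi(x)$ and covariance $\sigma_\phi^2(x) I$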

Maximizing ELBO

- KL divergence can be computed analytically

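- The reconstruction term is approximated with a Monte Carlo estimate

- Objective: $\arg\max_{\phi, \theta} \; \mathbb{E}_{q_\phi(z \mid x)}\left[\log p_\theta(x \mid z)\right] - D_{\mathrm{KL}}\left(q_\phi(z \mid x) \,\|\, p(z)\right) \approx \arg\max_{\phi, \theta} \; \frac{1}{L} \sum_{l=1}^{L} \log p_\theta\left(x \mid z^{(l)}\right) - D_{\mathrm{KL}}\left(q_\phi(z \mid x) \,\|\, p(z)\right)$

- IMPORTANT: the latents $\{z^{(l)}\}_{l=1}^{L}$ are sampled from $q_\phi(z \mid x)$ for every sample $x$ in the dataset, i.e. we make sure that going $x \to z \to x$ gives a good reconstruction

- the KL divergence ensures that the posterior maps to a well-behaved distribution, ensuring that we’ll be able to sample from the prior $p(z)$ to create samples later on. (It obviously also ensures that we’re maximizing the ELBO.)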
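For a diagonal-Gaussian encoder and a standard Gaussian prior, the analytic KL term has the well-known closed form $\frac{1}{2} \sum_j \left(\sigma_j^2 + \mu_j^2 - 1 - \log \sigma_j^2\right)$. The sketch below compares it against a Monte Carlo estimate of the same quantity. The function names and the log-variance parameterization are assumptions made for illustration, not something the source prescribes.

```python
import numpy as np

def kl_diag_gaussian_to_standard(mu, log_var):
    """KL( N(mu, diag(exp(log_var))) || N(0, I) ), summed over latent dimensions."""
    mu = np.asarray(mu, dtype=float)
    log_var = np.asarray(log_var, dtype=float)
    return 0.5 * np.sum(np.exp(log_var) + mu**2 - 1.0 - log_var, axis=-1)

def kl_monte_carlo(mu, log_var, num_samples=200_000, seed=0):
    """Estimate the same KL as the sample mean of log q(z|x) - log p(z), z ~ q."""
    rng = np.random.default_rng(seed)
    mu = np.asarray(mu, dtype=float)
    log_var = np.asarray(log_var, dtype=float)
    std = np.exp(0.5 * log_var)
    z = mu + std * rng.standard_normal((num_samples, mu.shape[-1]))
    log_q = -0.5 * np.sum(((z - mu) / std) ** 2 + log_var + np.log(2 * np.pi), axis=-1)
    log_p = -0.5 * np.sum(z**2 + np.log(2 * np.pi), axis=-1)
    return np.mean(log_q - log_p)

# Hypothetical encoder outputs for a single input with a 2-D latent.
mu, log_var = [1.0, -0.5], [0.0, -1.0]
print("analytic KL:   ", kl_diag_gaussian_to_standard(mu, log_var))
print("Monte Carlo KL:", kl_monte_carlo(mu, log_var))
```

The closed form is exact and needs no sampling, which is why only the reconstruction term is estimated by Monte Carlo.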

Reparameterization trick

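- The latents obtained by going through the encoder and then sampling need to be differentiable with respect to the encoder parameters $\phi$, because they are passed on to the decoder for the reconstruction

- To ensure this, each $z$ is computed as a deterministic function of the input $x$ and auxiliary noise $\epsilon$

- $z \sim q_\phi(z \mid x) = \mathcal{N}\left(z; \mu_\phi(x), \sigma_\phi^2(x) I\right)$ (in theory)

- $z = \mu_\phi(x) + \sigma_\phi(x) \odot \epsilon$ (in practice) with $\epsilon \sim \mathcal{N}(0, I)$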
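The "in practice" form can be sketched in a few lines of NumPy. This is an illustrative sketch only: `reparameterize` is a hypothetical helper, the means and variances are made-up values, and a real VAE would rely on an autodiff framework rather than the hand-written gradient shown here.

```python
import numpy as np

def reparameterize(mu, log_var, rng):
    """Draw z = mu + sigma * eps with eps ~ N(0, I); returns (z, eps)."""
    mu = np.asarray(mu, dtype=float)
    std = np.exp(0.5 * np.asarray(log_var, dtype=float))
    eps = rng.standard_normal(mu.shape)   # all randomness lives in eps
    return mu + std * eps, eps

rng = np.random.default_rng(0)
# Hypothetical encoder outputs, repeated so that sample statistics are stable.
mu = np.full((100_000, 2), [1.5, -0.5])
log_var = np.full((100_000, 2), [0.0, np.log(0.25)])
z, eps = reparameterize(mu, log_var, rng)

# Because z is a deterministic function of (mu, sigma, eps), gradients pass
# through the sample. For f(z) = z^2: df/dmu = 2 z * dz/dmu = 2 z, so the
# sample mean of 2 z estimates d E[z^2] / dmu = 2 mu.
grad_mu_estimate = np.mean(2.0 * z, axis=0)
print("sample mean of z:", z.mean(axis=0))
print("sample std of z: ", z.std(axis=0))
print("pathwise gradient estimate:", grad_mu_estimate)
```

Sampling $z$ directly from $\mathcal{N}(\mu, \sigma^2 I)$ would give the same distribution, but the dependence on $\mu$ and $\sigma$ would then be hidden inside the sampler instead of being an explicit differentiable expression.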

Encoder

- We can use the encoder's latents $z \sim q_\phi(z \mid x)$ as a meaningful and useful representation (for latent diffusion!!)

Posterior collapse

What is it

- Posterior collapse occurs when the approximate posterior $q_\phi(z \mid x)$ collapses to the prior $p(z)$ irrespective of the input $x$.

- This means that the latent variable $z$ carries very little information about the input $x$,

- effectively rendering the latent space meaningless.

- In this scenario, the encoder outputs a distribution that is very close to the prior

- making the KL divergence term very small,

- but at the cost of losing meaningful representations of the input data.

Causes

- Overpowering Decoder: If the decoder is too powerful, it can learn to reconstruct the input data directly from the prior distribution, making the latent variable redundant.

- High KL Weight: During training, the KL divergence term can dominate the loss function, pushing the posterior $q_\phi(z \mid x)$ to align closely with the prior $p(z)$.

How to address it

- Adjusting the KL Weight: Introducing an annealing schedule where the weight of the KL divergence term is gradually increased during training can help prevent early posterior collapse.

- VAE Variants: Using alternative VAE architectures such as β-VAE, which introduces an adjustable weight on the KL divergence term, or hierarchical VAEs, which have more complex latent structures, can help avoid posterior collapse.

- Structured Priors: Using more complex priors than a simple Gaussian can help in maintaining a meaningful latent space.
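The KL annealing idea can be sketched as a simple linear warm-up on the KL weight. The function names, the linear shape of the schedule, and the parameters `warmup_steps` and `beta_max` are assumptions chosen for illustration; other schedules (for example cyclical ones) are also used in practice.

```python
def kl_weight(step, warmup_steps, beta_max=1.0):
    """Linear KL annealing: 0 at step 0, beta_max from warmup_steps onward."""
    if warmup_steps <= 0:
        return beta_max
    return beta_max * min(1.0, max(0.0, step / warmup_steps))

def annealed_loss(reconstruction_nll, kl, step, warmup_steps, beta_max=1.0):
    """Negative ELBO with a step-dependent weight on the KL term."""
    return reconstruction_nll + kl_weight(step, warmup_steps, beta_max) * kl

# Hypothetical training loop values: the KL term is phased in over 1000 steps.
for step in (0, 250, 500, 1000, 5000):
    print(step, kl_weight(step, warmup_steps=1000))
```

Keeping the weight fixed at a value other than 1 after the warm-up (via `beta_max`) corresponds to the adjustable KL weight used in β-VAE.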